Engineers at the Tokyo Institute of Technology (Tokyo Tech) have demonstrated a simple computational approach for improving the way artificial intelligence classifiers, such as neural networks, can be trained on limited amounts of sensor data. Emerging applications of the Internet of Things often require edge devices that can reliably classify behaviors and situations based on time series.

However, training data are difficult and expensive to acquire. The proposed approach promises to substantially increase the quality of classifier training, at almost no extra cost.

In recent times, the prospect of having huge numbers of Internet of Things (IoT) sensors quietly and continuously monitoring countless aspects of human, natural, and machine activities has gained ground. As our society becomes more and more hungry for data, scientists, engineers, and strategists increasingly hope that the additional insight we can derive from this pervasive monitoring will improve the quality and efficiency of many production processes, also resulting in improved sustainability.

The world in which we live is incredibly complex, and this complexity is reflected in the huge multitude of variables that IoT sensors may be designed to monitor. Some are natural, such as the amount of sunlight, moisture, or the movement of an animal, while others are artificial, for example, the number of cars crossing an intersection or the strain applied to a suspended structure like a bridge.

What these variables all have in common is that they evolve over time, creating what is known as time series, and that meaningful information is expected to be contained in their relentless changes. In many cases, researchers are interested in classifying a set of predetermined conditions or situations based on these temporal changes, as a way of reducing the amount of data and making it easier to understand.

For instance, measuring how frequently a particular condition or situation arises is often taken as the basis for detecting and understanding the origin of malfunctions, pollution increases, and so on.

Some types of sensors measure variables that in themselves change very slowly over time, such as moisture. In such cases, it is possible to transmit each individual reading over a wireless network to a cloud server, where the analysis of large amounts of aggregated data takes place. However, more and more applications require measuring variables that change rather quickly, such as the accelerations tracking the behavior of an animal or the daily activity of a person.

Since many readings per second are often required, it becomes impractical or impossible to transmit the raw data wirelessly, due to limitations of available energy, data charges, and, in remote locations, bandwidth. To circumvent this issue, engineers all over the world have long been looking for clever and efficient ways to pull aspects of data analysis away from the cloud and into the sensor nodes themselves.

This is often called edge artificial intelligence, or edge AI. In general terms, the idea is to send wirelessly not the raw recordings, but the results of a classification algorithm searching for particular conditions or situations of interest, resulting in a much more limited amount of data from each node.

There are, however, many challenges to face. Some are physical and stem from the need to fit a good classifier into what is usually a rather limited amount of space and weight, often while making it run on a very small amount of power so that long battery life can be achieved.

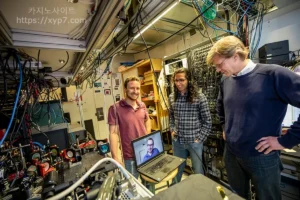

“Good engineering solutions to these requirements are emerging every day, but the real challenge holding back many real-world solutions is actually another. Classification accuracy is often just not good enough, and society requires reliable answers to start trusting a technology,” says Dr. Hiroyuki Ito, head of the Nano Sensing Unit where the study was conducted.

“Many exemplary applications of artificial intelligence, such as self-driving cars, have shown that how good or poor an artificial classifier is depends heavily on the quality of the data used to train it. But, more often than not, sensor time series data are truly demanding and expensive to acquire in the field. For example, considering cattle behavior monitoring, to acquire it engineers need to spend time at farms, instrumenting individual cows and having experts patiently annotate their behavior based on video footage,” adds co-author Dr. Korkut Kaan Tokgoz, formerly part of the same research unit and now with Sabanci University in Turkey.

Because training data is so precious, engineers have started looking at new ways of making the most of even a quite limited amount of data available to train edge AI devices. An important trend in this area is the use of techniques known as “data augmentation,” wherein some manipulations, deemed reasonable based on experience, are applied to the recorded data to try to mimic the variability and uncertainty that can be encountered in real applications.

“For example, in our previous work, we simulated the unpredictable rotation of a collar containing an acceleration sensor around the neck of a monitored cow, and found that the additional data generated in this way could really improve the performance in behavior classification,” explains Ms. Chao Li, doctoral student and lead author of the study.
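The collar-rotation idea described in the quote can be illustrated with a minimal sketch. It is not the authors' implementation: it assumes a three-axis accelerometer whose x axis lies along the animal's neck, so that a collar slipping around the neck corresponds to a rotation about x. The names `rotate_about_x` and `augment_with_collar_rotation`, and the `max_angle` parameter, are hypothetical.

```python
import math
import random


def rotate_about_x(sample, angle):
    """Rotate one (ax, ay, az) acceleration sample about the x axis by `angle` radians."""
    ax, ay, az = sample
    c, s = math.cos(angle), math.sin(angle)
    return (ax, c * ay - s * az, s * ay + c * az)


def augment_with_collar_rotation(recording, rng, max_angle=math.pi):
    """Return a copy of `recording` as if the collar had slipped by one random angle.

    Assumption: the x axis is aligned with the neck, and the collar stays at the
    same (random) angle for the whole recording.
    """
    angle = rng.uniform(-max_angle, max_angle)
    return [rotate_about_x(sample, angle) for sample in recording]
```

Calling `augment_with_collar_rotation(recording, random.Random())` several times on the same labelled recording would yield several differently oriented copies; the magnitude of each acceleration vector is unchanged.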

“However, we also realized that we needed a much more general approach to augmenting sensor time series, one that could in principle be used for any kind of data and not make specific assumptions about the measurement condition. Moreover, in real-world situations, there are actually two issues, related but distinct. The first is that the overall amount of training data is often limited. The second is that some situations or conditions occur much more frequently than others, and this is unavoidable. For example, cows naturally spend much more time resting or ruminating than drinking.”

“Yet, accurately measuring the less frequent behaviors is essential to properly judge the welfare status of an animal. A cow that does not drink will surely succumb, even though the accuracy of classifying drinking may have little impact on common training approaches due to its rarity. This is called the data imbalance problem,” she adds.

The computational research performed by the researchers at Tokyo Tech, initially targeted at improving cattle behavior monitoring, offers a possible solution to these problems by combining two very different and complementary approaches. The first is known as sampling, and consists of extracting “snippets” of time series corresponding to the conditions to be classified, always starting from different and random instants.

The number of snippets extracted is adjusted carefully, ensuring that one always ends up with approximately the same number of snippets across all the behaviors to be classified, regardless of how common or rare they are. This results in a more balanced dataset, which is decidedly preferable as a basis for training any classifier such as a neural network.
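The sampling step can be sketched as follows. This is an illustrative example under stated assumptions rather than the study's code: recordings are assumed to be already grouped by behavior label, and the function name `sample_balanced_snippets` and its parameters (`snippet_len`, `per_class`) are hypothetical.

```python
import random


def sample_balanced_snippets(recordings_by_label, snippet_len, per_class, rng):
    """Draw `per_class` fixed-length snippets for every behavior label.

    `recordings_by_label` maps a label (e.g. "resting") to a list of recordings,
    each a list of samples. Every snippet starts at a random instant inside a
    randomly chosen recording of that label, so rare behaviors end up with the
    same number of snippets as common ones.
    """
    balanced = {}
    for label, recordings in recordings_by_label.items():
        usable = [r for r in recordings if len(r) >= snippet_len]
        if not usable:
            raise ValueError(f"no recording long enough for label {label!r}")
        snippets = []
        for _ in range(per_class):
            recording = rng.choice(usable)
            start = rng.randrange(len(recording) - snippet_len + 1)
            snippets.append(recording[start:start + snippet_len])
        balanced[label] = snippets
    return balanced
```

Note that when a behavior has very little recorded data, many of its snippets will overlap heavily, since they are all cut from the same short recordings.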

Because the procedure is based on selecting subsets of actual data, it is safe in terms of avoiding the generation of artifacts which may stem from artificially synthesizing new snippets to make up for the less represented behaviors. The second approach is known as surrogate data, and involves a very robust numerical procedure to generate, from any existing time series, any number of new ones that preserve some key features but are completely uncorrelated.

“This virtuous combination turned out to be very important, because sampling may cause a lot of duplication of the same data when certain behaviors are too rare compared to others. Surrogate data are never the same and prevent this problem, which can very negatively affect the training process. And a key aspect of this work is that the data augmentation is integrated with the training process, so different data are always presented to the network throughout its training,” explains Mr. Jim Bartels, co-author and doctoral student at the unit.

Surrogate time series are generated by completely scrambling the phases of one or more signals, thus rendering them totally unrecognizable when their changes over time are considered. However, the distribution of values, the autocorrelation, and, if there are multiple signals, the cross-correlation, are perfectly preserved.
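The phase-scrambling idea can be illustrated with a plain phase-randomized surrogate for a single signal. This is a minimal sketch and not necessarily the exact procedure used in the study: it keeps every Fourier amplitude (and therefore the power spectrum and circular autocorrelation) while drawing new random phases. This simple variant does not by itself preserve the distribution of values exactly; refinements such as iterative amplitude adjustment are commonly used for that, and for several signals the same random phase shifts would be added to all channels to keep their cross-correlation. A naive O(n²) transform from the standard library is used only to keep the example self-contained, and the function names are hypothetical.

```python
import cmath
import math
import random


def dft(x):
    """Naive discrete Fourier transform, adequate for short snippets."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]


def inverse_dft_real(spectrum):
    """Inverse transform of a Hermitian-symmetric spectrum, returning real samples."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * math.pi * k * t / n)
                for k in range(n)).real / n
            for t in range(n)]


def phase_randomized_surrogate(x, rng):
    """Return a surrogate of `x` with the same amplitude spectrum and random phases."""
    n = len(x)
    spectrum = dft(x)
    surrogate = list(spectrum)
    # Bin 0 (the mean) and, for even n, the Nyquist bin are left untouched.
    for k in range(1, (n - 1) // 2 + 1):
        phase = rng.uniform(0.0, 2.0 * math.pi)
        surrogate[k] = abs(spectrum[k]) * cmath.exp(1j * phase)
        # Mirror bin keeps the spectrum Hermitian so the output stays real.
        surrogate[n - k] = surrogate[k].conjugate()
    return inverse_dft_real(surrogate)
```

Each call with a fresh random state gives a different surrogate of the same snippet, which is what allows new data to be presented to the network at every training step.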

“In another previous work, we found that many empirical operations such as reversing and recombining time series actually helped to improve training. As these operations change the nonlinear content of the data, we later reasoned that the sort of linear features which are retained during surrogate generation are probably key to performance, at least for the application of cow behavior recognition that I focus on,” further explains Ms. Chao Li.

“The method of surrogate time series originates from an entirely different field, namely the study of nonlinear dynamics in complex systems like the brain, for which such time series are used to help distinguish chaotic behavior from noise. By bringing together our different experiences, we quickly realized that they could be helpful for this application, too,” adds Dr. Ludovico Minati, second author of the study and also with the Nano Sensing Unit.

“However, considerable caution is needed because no two application scenarios are ever the same, and what holds true for the time series reflecting cow behaviors may not be valid for other sensors monitoring different types of dynamics. In any case, the elegance of the proposed method is that it is quite essential, simple, and generic. Therefore, it will be easy for other researchers to quickly try it out on their specific problems,” he adds.

After this interview, the team explained that this type of research will be applied first of all to improving the classification of cattle behaviors, for which it was initially intended and on which the unit is conducting multidisciplinary research in partnership with other universities and companies.

“One of our main goals is to successfully demonstrate high accuracy on a small, inexpensive device that can monitor a cow over its entire lifetime, allowing early detection of disease and therefore really improving not only animal welfare but also the efficiency and sustainability of farming,” concludes Dr. Hiroyuki Ito.